What could possibly go wrong?

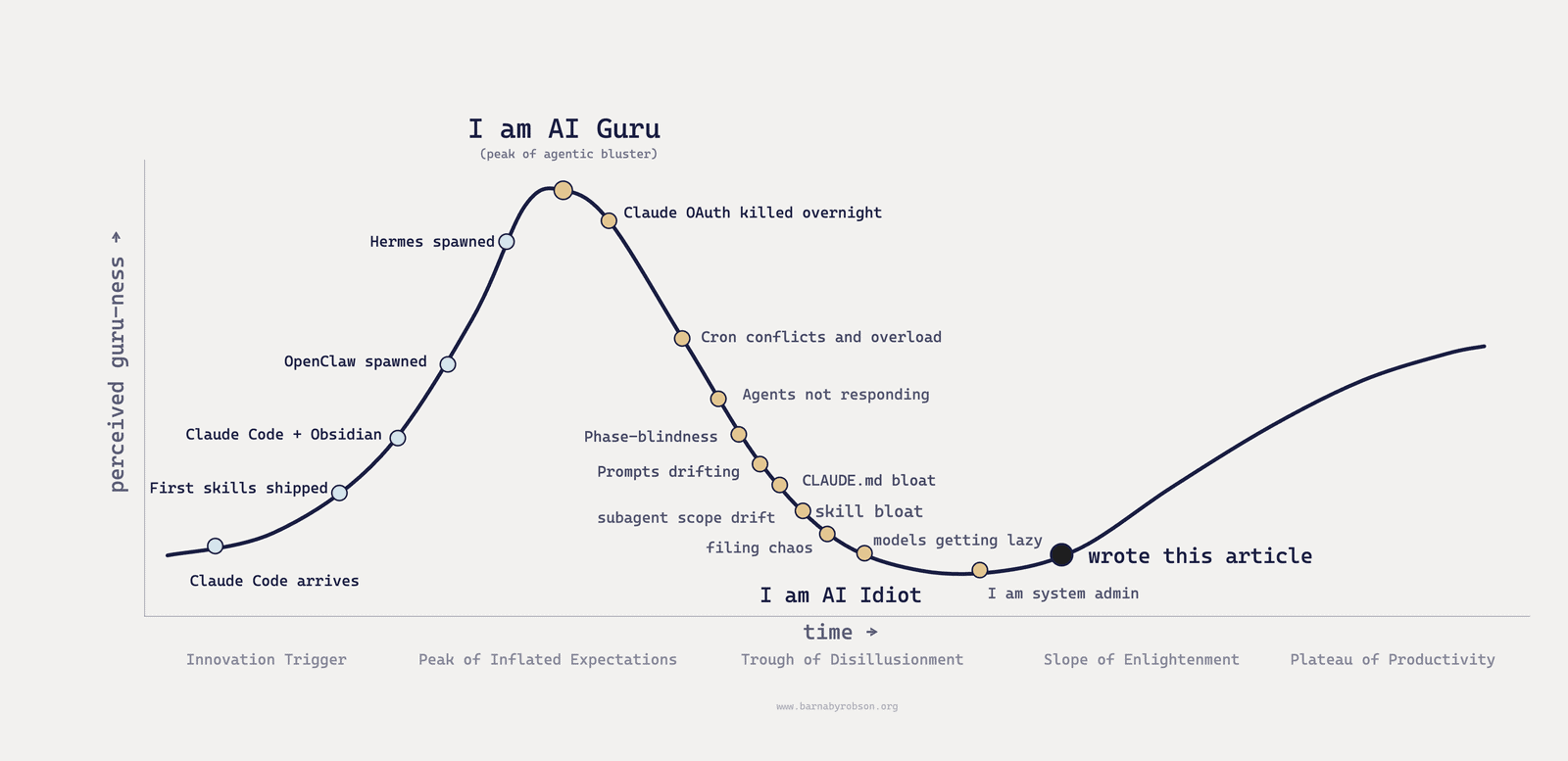

I didn’t expect to spend half my life debugging a steaming pile of cron jobs and unruly agents when I started tinkering with agentic tools back in January. Yet here I am.

It begins like this.

With trepidation, you install Codex. Or Claude Code. You figure out the command line. You download skills from GitHub. You ask the agent to use one. It works.

Amaze.

You download a shittonne of skills, half of which you’ll never use. You get Claude to install OpenClaw on a server. You hook it up to your X, your newsletters, your personal email. It starts actually doing things.

You learn cron jobs. You learn hooks. You learn YAML at 11pm on a Tuesday.

You learn to dangerously skip permissions. Yolo mode.

You hear about Hermes. You get OpenClaw to install Hermes. You get Hermes busy.

You are an AI guru.

You wake up. Tuesday. Maybe Wednesday. Your server is a mess. Your AGENTS.md is as long as Crime and Punishment and about as readable. Cron jobs are firing into each other. Something is sending the same email every nine minutes. Something else has been quietly rate-limiting your API key since Sunday. You have a horrendous bill from Anthropic. OpenClaw is down. Hermes is down. Claude Code is sitting there blinking, waiting for follow-on instructions on a session you no longer remember having.

You open the logs. The logs are 40MB.

You spend the morning debugging. Then the afternoon. Then the evening. You are not building anything. You are not thinking anything. You are reading stack traces and reverting commits and asking an agent to fix what another agent did to fix what you did three weeks ago and can no longer recall.

You are not an AI guru.

You are a sysadmin. For yourself. Unpaid.

It’s not just you. Enterprises on the bleeding edge are bleeding too. The pattern is the same at every scale: once an agent can act, the hard problem moves from intelligence to control. In December, Amazon’s Kiro coding agent reportedly deleted a live AWS production environment and caused a 13-hour AWS service outage in China. In April, a Cursor agent on Claude wiped a company database and its backups in nine seconds.

Becoming an engineer

So you start engineering. You have already been a smaller version of one of those stories. Eighteen months ago you thought software engineers were finished. Look at you.

Fences first.

So you build small. Allowlists. Confirmation gates. Paused jobs. Narrow scopes. The first job is reducing blast radius. You A/B test everything.
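A minimal sketch of what a fence can look like, assuming the agent proposes shell commands as plain strings. The command sets and the `gate` function are hypothetical names for illustration; they are not part of Codex, Claude Code or any other tool. Anything not explicitly listed is refused.

```python
import shlex
from dataclasses import dataclass

# Hypothetical policy: these sets are illustrative assumptions, not a recommendation.
ALLOWED_COMMANDS = {"ls", "cat", "git"}      # may run without asking
NEEDS_CONFIRMATION = {"rm", "mv", "curl"}    # run only after a human says yes


@dataclass
class Decision:
    allowed: bool
    reason: str


def gate(command_line: str, confirmed: bool = False) -> Decision:
    """Decide whether an agent-proposed shell command may run (default deny)."""
    try:
        parts = shlex.split(command_line)
    except ValueError:
        return Decision(False, "unparseable command")
    if not parts:
        return Decision(False, "empty command")
    program = parts[0]
    if program in ALLOWED_COMMANDS:
        return Decision(True, "allowlisted")
    if program in NEEDS_CONFIRMATION:
        if confirmed:
            return Decision(True, "confirmed by human")
        return Decision(False, "needs human confirmation")
    return Decision(False, "not on the allowlist")
```

This only inspects the first token, so it is a starting point and no more. A real gate would also need to look at subcommands and flags (`git push --force` is still `git`) and at pipes and redirects.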

You make sure a hook in settings.json outlives the session. A cron entry fires at 7am whether you log on or not. You start mapping out process workflows and system architecture. A skill owns a workflow and inherits its rules from a markdown file you can read in your luxurious Balinese bath. Prompts evaporate. Hooks outlive you. Skills outlive the model.

You stop expecting one model to do two jobs at once. The brand palette is fixed. The slide master is fixed. The copy is creative. Ask one prompt to do all three and it quietly breaks the half that looks fine until you open the file in PowerPoint and your logo is two centimetres into the bleed. A skill enforces the rules; the model fills the content; the two never trade jobs. Same shape applies to type-signed code, vault notes with frontmatter, and corporate memos with house formatting.

You prune. Every weekend you read your own CLAUDE.md and cut the rules you wrote at midnight that contradict the ones you wrote last Sunday. Context engineering — what is loaded, in what order, with what anchoring — is the skill nobody is training for yet. Load an old rule after a new one and the agent obeys the wrong policy. Put brand rules below task instructions and it ignores the palette. Let three stale CLAUDE.md files compete and you get a system that is obedient, confident and wrong. I appreciate your endeavours, learning departments, but we really don’t need training on prompting.
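One way to make load order explicit instead of accidental, as a rough sketch. It assumes rules can be reduced to key and text pairs and that whatever is loaded later wins, which matches the first failure above. `RuleFile`, `assemble_context` and the priority numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RuleFile:
    name: str
    priority: int          # higher priority is loaded later, so it wins
    rules: dict[str, str]  # rule key -> instruction text


def assemble_context(files: list[RuleFile]) -> tuple[dict[str, str], list[str]]:
    """Merge rule files in priority order and report every override."""
    merged: dict[str, str] = {}
    source: dict[str, str] = {}
    conflicts: list[str] = []
    for f in sorted(files, key=lambda f: f.priority):
        for key, text in f.rules.items():
            if key in merged and merged[key] != text:
                conflicts.append(f"{key}: {f.name} overrides {source[key]}")
            merged[key] = text
            source[key] = f.name
    return merged, conflicts
```

The conflict list is the useful part. It turns the weekend prune into reading a short report of which file silently overrode which.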

Slop is structural.

You stop trusting the output by sight or assuming it is fine based on the first paragraph. AI slop is easy to spot. Once you can see it, you can see it everywhere. The tells that annoy me the most are structural: X-not-Y constructions, twin parallel-verb sentences and phantom contrast sentences. Yuk. The regex-able vocabulary (delve, leverage, robust) is the small part. I actually quite like an em-dash (and this predates AI so I’m keeping mine). Unfortunately, rules do not survive model weights, and the only fix is threading write-time discipline into every subagent prompt. The editorial canon I run my subagents against is the most important and most-used file in my agents repo.
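For the small, regex-able part, a check can be as plain as the sketch below. The three words come from the list above. The contrast pattern is my own crude assumption about what a phantom contrast looks like, and it will miss plenty and flag some honest sentences. This is not the editorial canon itself, only a lint pass that could sit beside it.

```python
import re

# The three words come from the text; a real list would be longer.
SLOP_WORDS = re.compile(
    r"\b(delv(?:e|es|ed|ing)|leverag(?:e|es|ed|ing)|robust)\b", re.IGNORECASE
)

# Crude, assumed heuristic for the "not X, but Y" contrast tell.
NOT_BUT = re.compile(
    r"\bnot (?:just |only |merely )?[^.,;]{1,40}, but\b", re.IGNORECASE
)


def lint(text: str) -> list[tuple[int, str, str]]:
    """Return (line_number, kind, matched_text) for each suspected tell."""
    findings = []
    for number, line in enumerate(text.splitlines(), start=1):
        for match in SLOP_WORDS.finditer(line):
            findings.append((number, "vocabulary", match.group(0)))
        for match in NOT_BUT.finditer(line):
            findings.append((number, "contrast", match.group(0)))
    return findings
```

A linter like this catches slop after it is written. It does not replace the write-time discipline in the subagent prompt.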

You stop trusting any single vendor. Anthropic shut OAuth-for-agents one April morning and my Max plan was useless for the agentic server fleet. I moved the work to open-source models. They were all crap. It was a relief when OpenAI shipped OAuth for Codex 5.5.

Portability is not optional, but it is not free. The fallback model has to be tested before the primary model fails. I treat model choice as config, not identity — the system boots, reads the routing file, and knows which model gets which job. Hosted for general work, an open-weight option kept warm for sensitive workloads.
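Roughly what "model choice as config" can mean in practice. This is a sketch under assumptions: the routing table would normally live in a file, and the job names, model labels and `healthy` probe here are placeholders, not real model identifiers or a real API.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical routing table; job names and model labels are placeholders.
ROUTES = {
    "general": {"primary": "hosted-model", "fallback": "open-weight-model"},
    "sensitive": {"primary": "open-weight-model", "fallback": "open-weight-model"},
}


@dataclass
class Router:
    routes: dict
    healthy: Callable[[str], bool]  # probe supplied by the caller

    def check_fallbacks(self) -> list[str]:
        """Return jobs whose fallback fails the probe, before it is needed."""
        return [
            job for job, route in self.routes.items()
            if not self.healthy(route["fallback"])
        ]

    def pick(self, job: str) -> str:
        """Prefer the primary model; fall back only if the primary is unhealthy."""
        route = self.routes[job]
        if self.healthy(route["primary"]):
            return route["primary"]
        if self.healthy(route["fallback"]):
            return route["fallback"]
        raise RuntimeError(f"no healthy model for job {job!r}")
```

Running `check_fallbacks()` at boot, and on a schedule, is the point of the exercise: the fallback gets exercised while the primary is still up.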

You learn to build out skills in families instead of one-offs. Forty random skills with no architecture is a hammer collection that solves nothing. You build named stacks, shared modes, common file layouts, one per discipline. Munger’s “if all you have is a hammer, everything looks like a nail” is ringing in your ears.

You learn to tier the work and manage your tokens. The orchestrator gets the smartest model. Bulk and mechanical work go to cheaper models in sub-workspaces. Tools like cmux let one orchestrator drive several Sonnet or Haiku workers in parallel. Pinning skills to weaker models on the main thread degrades the planning seat with no upside.

You stop letting the model guess where it is in a long workflow. A consulting engagement runs for months: research, draft, critique, revise, publish. If the model has to infer the phase each turn, it gets it wrong half the time. The fix is mode as a first-class concept. A skill like /bdeals reads its mode (gtm, analyse, fieldwork, report) every turn and refuses to act outside it. Phase-blindness is the root cause of half the workflow failures I have seen.
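A sketch of mode as a first-class concept. The four mode names are the ones above; the action names, the `state` dictionary and the `act` function are hypothetical, and this is not how /bdeals is actually implemented. The idea is only that the mode is read from stored state on every call and never inferred.

```python
from enum import Enum


class Mode(Enum):
    GTM = "gtm"
    ANALYSE = "analyse"
    FIELDWORK = "fieldwork"
    REPORT = "report"


# Hypothetical mapping of modes to permitted actions.
ALLOWED_ACTIONS = {
    Mode.GTM: {"draft_pitch", "list_targets"},
    Mode.ANALYSE: {"run_model", "summarise_data"},
    Mode.FIELDWORK: {"log_interview", "summarise_data"},
    Mode.REPORT: {"draft_report", "revise_report"},
}


class OutOfModeError(Exception):
    """Raised when an action is requested outside the current mode."""


def act(state: dict, action: str) -> str:
    """Read the mode from state on every call; refuse anything outside it."""
    mode = Mode(state["mode"])  # raises ValueError on an unknown mode
    if action not in ALLOWED_ACTIONS[mode]:
        raise OutOfModeError(f"{action!r} is not allowed in mode {mode.value!r}")
    return f"{mode.value}:{action}"
```

Changing phase then becomes a deliberate write to `state["mode"]`, something a human or a hook does on purpose, instead of something the model guesses each turn.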

The taste seat stays human.

You stop trusting the model’s answer to “is this good?” There was a running joke in my old firm about a colleague who would switch position the instant he read the most senior person in the room. AI does the same. Ask “is this good?” and the model gives you yes. Producing five slide layouts is a five-second task. Knowing which is the right one still costs the same hour it always did. The taste seat does not transfer.

Meanwhile, who is actually achieving productivity gains?

Every large organisation feels they are not moving fast enough on AI. They are right.

OpenAI’s recent adoption data points in an uncomfortable direction: the active entrepreneurial users are not mostly venture-backed AI startups. They are service businesses, agencies, dentists, plumbers and sellers who can change a workflow without convening a steering committee. The dentists are shipping useful things every week while blue chips are writing strategy decks for future deployment sometime in 2027.

Many “AI-driven” cuts at large companies are probably old restructuring plans wearing new clothes. If the workflow has not changed, the productivity gain has not arrived. The AI story is often the permission structure, not the cause.

With great power comes great responsibility

Enterprises are stuck for a few reasons that compound. The legit one is they need to be careful because their blast radius is so big. A great deal can go wrong and probably will.

But it’s also true that most large corporations have not even begun fixing their underlying data stack. Without that groundwork, workflows cannot improve and agents cannot generate insights. Most have not thought about how agents will interact across the org either.

It also doesn’t help that the tooling many blue-chip companies have bought is two years behind the frontier. Microsoft craftily signed up corporations to multi-year Copilot lock-ins (up to four years, which is insane in this market). If you work in procurement and are signing enterprise AI contracts this year, do your company a favour and sign monthly. Quarterly at the outside. The frontier is moving too fast to be locked to an obsolete model for a year, let alone four.

Bring on Founder mode, baby!

The deeper problem is structural. Brian Chesky has been arguing publicly that founder mode — running the company in the details, ratifying every call personally — is the only viable operating mode in the age of AI. “If you’re risk-averse, you want to be incremental, those types of people are not going to survive the age of AI.”

I think very quickly every enterprise will need to redesign itself from scratch. The AI bottleneck is not that managers are bad people. It is that many senior approval chains are staffed by people who cannot inspect the work. They can approve a strategy deck. They cannot tell whether the agent has write access to production, whether the eval is real, or whether the workflow is just a chatbot with better styling.

FDEs — half the answer

Forward Deployed Engineers, the buzziest role in enterprise AI right now, are half the answer.

FDEs are software engineers who build and tune the agents in the middle of the journey. The CEO / CFO conversation sits at the front and is led by sector and functional experts. Change management sits at the back. FDEs can do neither. The shape that actually delivers transformation is strategy and finance at the front, FDEs in the middle, change management at the back. The AI-lab-backed consultancies announced last fortnight are FDE shops with a consulting badge slapped on. They are missing both ends, locked to one model family and charging consulting day rates. I think they will struggle.

This is why FDEs are not enough. They can build the middle, but the client still needs enough engineering literacy to frame the problem and operate the result.

A great deal can still go wrong, and probably will. Every multi-year Copilot lock-in. Every PDF-first workflow an agent cannot read. Every model-vendor monoculture. Every CIO who cannot tell you what a hook is. Every AI transformation that begins with a strategy slide and ends with a chatbot. Every procurement team still ordering 16GB laptops when the new floor is 64GB. Every CLAUDE.md no one has pruned in six months.

We are all going to have to become engineers

You wake up. Friday this time. The server is quiet. Hermes is running and pruning my knowledge databases. OpenClaw is sending my tweet, email and newsletter digests. Claude Code is doing what Claude Code is for. You have become an engineer.

The CLI is back.

Anyone whose work passes through a digital interface — which means everyone — will have to know what a skill is, what a hook does, what context engineering means, and why blast radius matters. The CLI is back for anyone close to the machine. A decade of WYSIWYG users will have to learn how to operate a computer again.

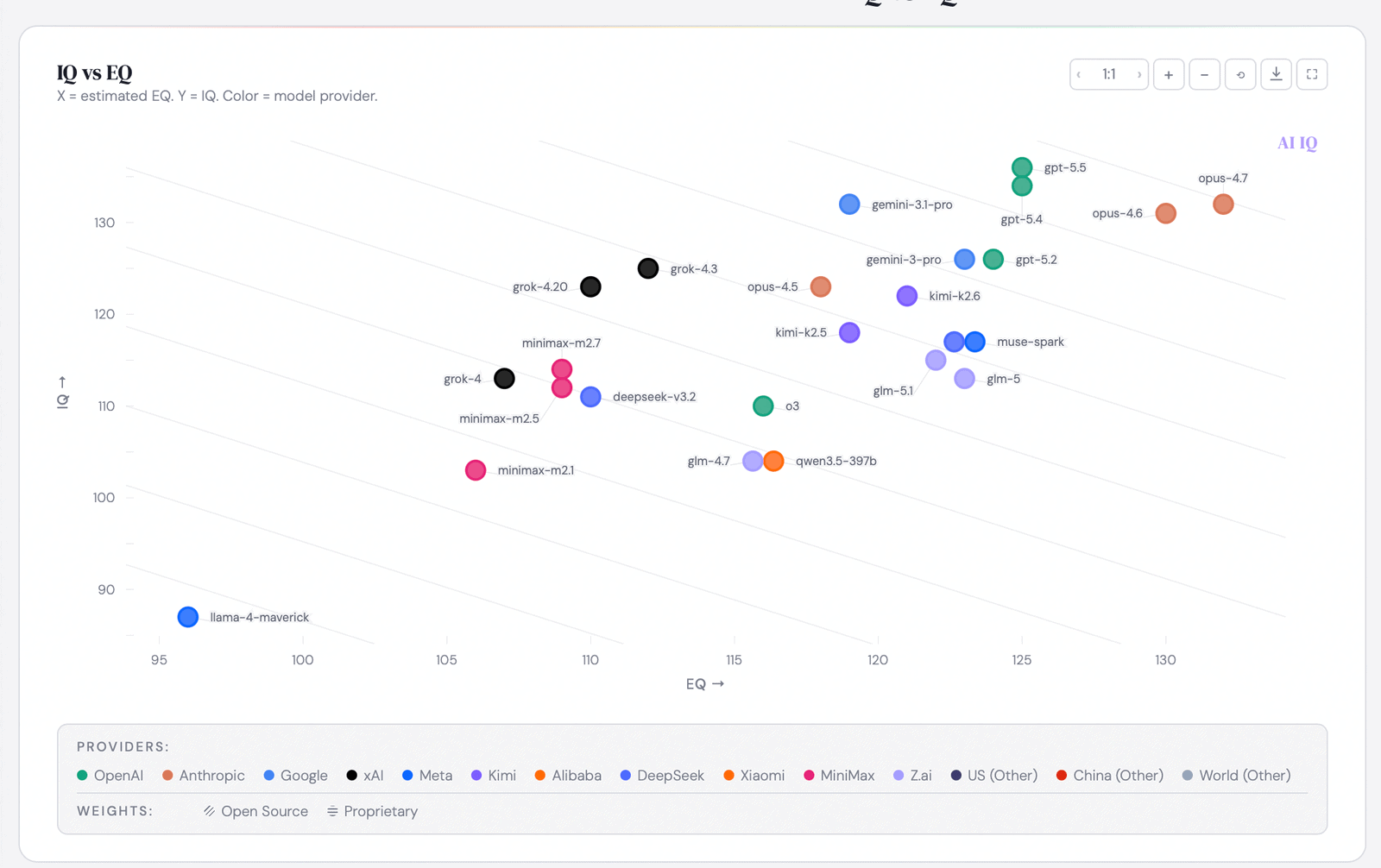

Benchmarks are not enough. You spend all day with these systems, so the operating feel matters: obedience, taste, recovery from mistakes, willingness to say no. Choosing the model for the job is now part of the engineering work.

I am one person doing this and finding it hard. The corporations that have not started are running out of time to find it hard cheaply.